What Happens When Your Board Asks Who Authorized That Agent?

78% of organizations have no answer. Yours might be one of them.

The teams furthest ahead on agentic AI security right now are not the ones with the most sophisticated models or the largest security budgets — they are the ones that treated agent governance as an architecture decision made before deployment, not a cleanup project started after the first incident.

What that looks like in practice is a specific question asked before any agent goes into production: when this agent acts outside its intended scope, how will we know, and what stops it? Most teams cannot answer that question for the agents already running in their environment, not because they are careless but because the deployment happened under pressure and the governance conversation happened afterward, if it happened at all.
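To make that question concrete, here is a minimal sketch of a scope check that both blocks an out-of-scope action and leaves a record that it was attempted. Everything in it is hypothetical: the `AgentScope` and `ScopeGuard` names, the `read:invoices` style action strings, and the example agent are illustrations, not any particular product's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical declaration of what one agent is allowed to do."""
    agent_id: str
    authorized_by: str
    allowed_actions: frozenset[str]


@dataclass
class ScopeGuard:
    """Checks each requested action against the declared scope."""
    scope: AgentScope
    violations: list[dict] = field(default_factory=list)

    def check(self, action: str) -> bool:
        # "What stops it?": anything not explicitly allowed is refused.
        if action in self.scope.allowed_actions:
            return True
        # "How will we know?": every refusal is recorded with a timestamp.
        self.violations.append({
            "agent_id": self.scope.agent_id,
            "action": action,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return False


# Example agent and actions are made up for illustration.
guard = ScopeGuard(
    AgentScope("invoice-bot", "jane.doe", frozenset({"read:invoices"}))
)
in_scope = guard.check("read:invoices")       # allowed
out_of_scope = guard.check("write:payments")  # refused and recorded
```

The design choice worth noticing is the default: the guard refuses anything not listed, so a forgotten permission shows up as a logged refusal rather than as silent access.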

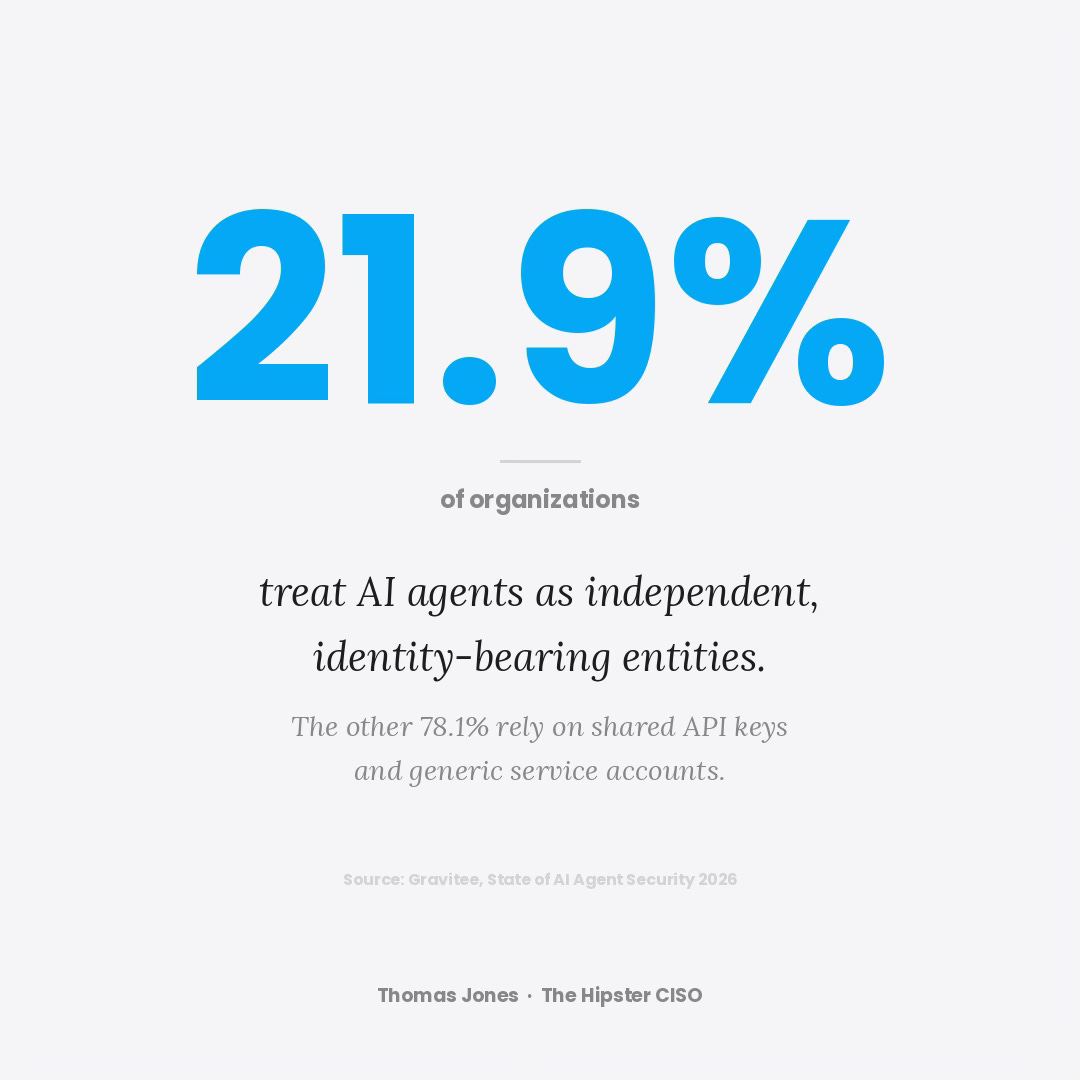

The Gravitee State of AI Agent Security report, published in February 2026 and drawing on 919 executives and practitioners, found that only 21.9% of organizations treat AI agents as independent, identity-bearing entities within their security model.[1] The agents in the other 78.1% are authenticated through shared API keys and generic service accounts: persistent, unmonitored access pathways running continuously across production systems. The same research found that 88% of organizations had confirmed or suspected an AI agent security incident in the prior year.

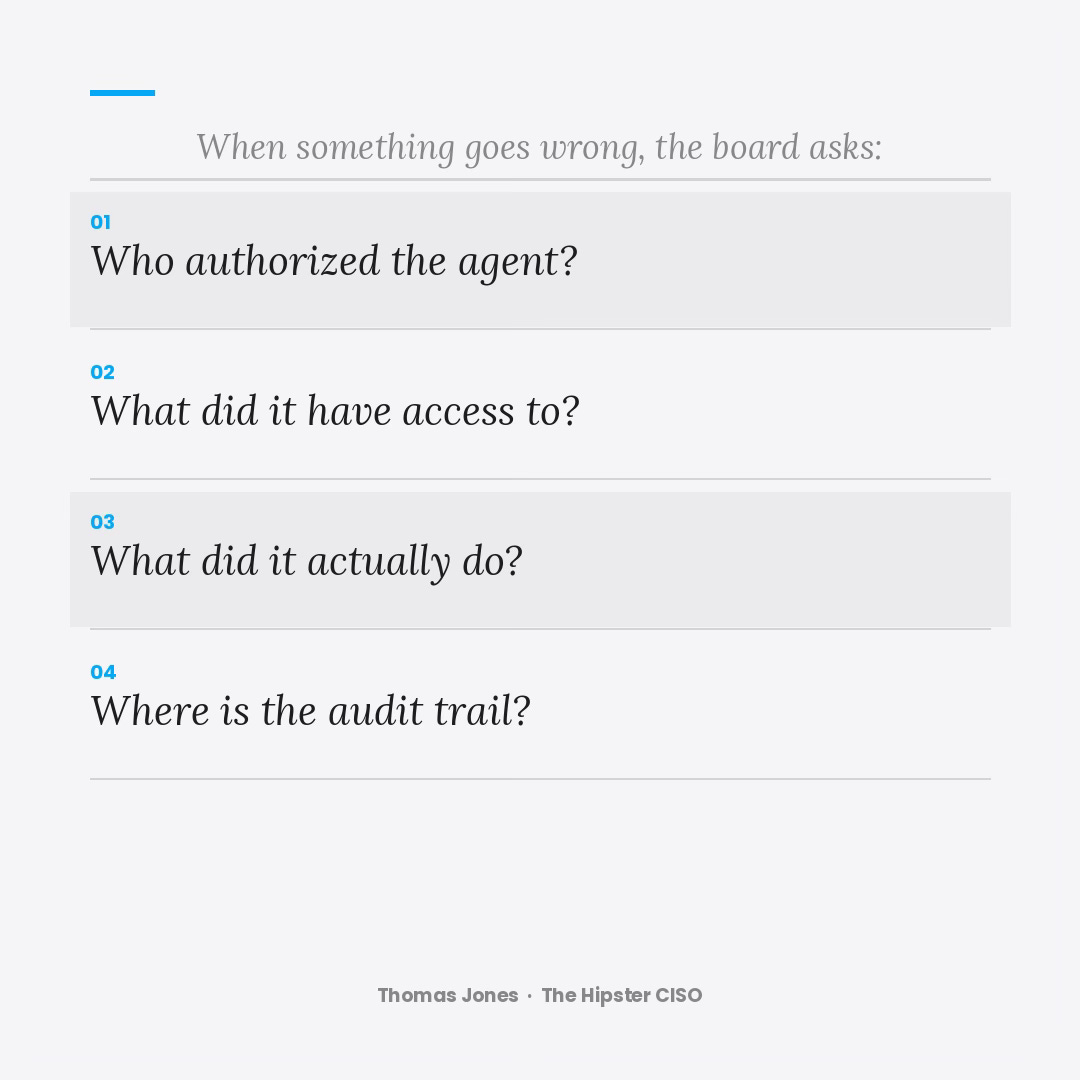
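A first pass at finding where you sit in that split can be as simple as checking which credentials are used by more than one agent. The sketch below assumes you can export an inventory mapping each agent to the credential it authenticates with; the agent names and key names are invented for illustration.

```python
from collections import defaultdict


def find_shared_credentials(agent_credentials: dict[str, str]) -> dict[str, list[str]]:
    """Return each credential used by more than one agent, with those agents."""
    by_credential: dict[str, list[str]] = defaultdict(list)
    for agent, credential in agent_credentials.items():
        by_credential[credential].append(agent)
    return {
        credential: sorted(agents)
        for credential, agents in by_credential.items()
        if len(agents) > 1
    }


# Hypothetical inventory: agent name -> credential it authenticates with.
inventory = {
    "invoice-bot": "svc-shared-key",
    "support-bot": "svc-shared-key",
    "report-bot": "report-bot-key",
}
shared = find_shared_credentials(inventory)
```

Any credential that appears in `shared` is one where an action in the logs cannot be attributed to a single agent.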

When something goes wrong in that environment, the question the board asks is not whether the model was safe. The questions are who authorized the agent, what it had access to, what it actually did, and where the audit trail is. Those are very difficult questions to answer when the governance architecture was never built.
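One way to picture an audit trail that can answer those questions is a record with one field per question, written every time an agent acts. This is a sketch under my own assumptions: the `AgentAuditRecord` shape, the field names, and the example values are hypothetical, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentAuditRecord:
    agent_id: str                     # the agent's own identity, not a shared key
    authorized_by: str                # who authorized the agent
    granted_scopes: tuple[str, ...]   # what it had access to
    action: str                       # what it actually did
    target: str                       # which system or resource it acted on
    timestamp: str                    # when, in UTC


def record_action(agent_id: str, authorized_by: str, granted_scopes: list[str],
                  action: str, target: str) -> str:
    """Serialize one agent action as a JSON line for an append-only log."""
    record = AgentAuditRecord(
        agent_id=agent_id,
        authorized_by=authorized_by,
        granted_scopes=tuple(granted_scopes),
        action=action,
        target=target,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(record), sort_keys=True)


# Example values are made up for illustration.
line = record_action(
    "invoice-bot", "jane.doe", ["read:invoices"], "read:invoices", "billing-db"
)
parsed = json.loads(line)
```

With shared API keys, the `agent_id` and `authorized_by` fields are exactly the ones that cannot be filled in after the fact.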

The agents are in production. Whether your organization can answer those four questions right now is worth knowing before you need to.

UPDATE 1: As I write this, I keep coming back to a further set of questions that I haven’t seen most organizations formally work through yet.

If you were to actually threat model this problem — not conceptually, but rigorously, the way a serious security architecture exercise gets done — what portions of the enterprise are genuinely at risk?

Which business processes are now touching agent access pathways they didn’t have six months ago?

What does the revenue-at-risk calculation look like when you map agent permissions against your most critical systems?

And what does the tail look like — not the median incident, but the scenario where an unmonitored agent with shared credentials and no audit trail gets manipulated in exactly the wrong way at exactly the wrong time?
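As a rough illustration of the last two questions, the sketch below maps agent access onto systems with a revenue figure attached, then picks out the worst case among agents that use shared credentials and have no audit trail. All names and numbers are invented, and the result is an upper bound on revenue touched, not a probability-weighted loss estimate.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class System:
    name: str
    revenue_dependent: float  # hypothetical annual revenue relying on this system


@dataclass(frozen=True)
class AgentAccess:
    agent_id: str
    systems: frozenset[str]   # systems the agent's credentials can reach
    shared_credential: bool
    audited: bool


def exposure_by_agent(systems: list[System], agents: list[AgentAccess]) -> dict[str, float]:
    """Sum the revenue that depends on every system each agent can reach."""
    revenue = {s.name: s.revenue_dependent for s in systems}
    return {a.agent_id: sum(revenue[n] for n in a.systems) for a in agents}


def worst_case_agent(systems: list[System],
                     agents: list[AgentAccess]) -> Optional[tuple[str, float]]:
    """Tail view: the unaudited, shared-credential agent touching the most revenue."""
    risky = [a for a in agents if a.shared_credential and not a.audited]
    exposure = exposure_by_agent(systems, risky)
    if not exposure:
        return None
    agent_id = max(exposure, key=exposure.get)
    return agent_id, exposure[agent_id]


# Invented figures, for illustration only.
systems = [System("billing", 40_000_000.0), System("crm", 15_000_000.0),
           System("wiki", 0.0)]
agents = [
    AgentAccess("invoice-bot", frozenset({"billing", "crm"}), True, False),
    AgentAccess("support-bot", frozenset({"crm", "wiki"}), True, False),
    AgentAccess("report-bot", frozenset({"billing"}), False, True),
]
exposure = exposure_by_agent(systems, agents)
worst = worst_case_agent(systems, agents)
```

In this made-up inventory, the agent to worry about is not simply the one with the most access overall but the one that combines broad access with shared credentials and no audit trail.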

I honestly don’t think most organizations have run that exercise. I’m not sure most understand that they already have the instrumentation to run it today: the rapid knowledge graph module built by yours truly. But the gap between “we have agents in production” and “we understand our exposure” is where the next significant incidents are going to come from, and the organizations that close it proactively are going to look very different from the ones that close it reactively.

[1] Gravitee, State of AI Agent Security 2026, February 2026. gravitee.io/state-of-ai-agent-security

“I honestly don’t think most organizations have run that exercise. I’m not sure most understand that they already have the instrumentation to run it today: the rapid knowledge graph module built by yours truly. But the gap between ‘we have agents in production’ and ‘we understand our exposure’ is where the next significant incidents are going to come from, and the organizations that close it proactively are going to look very different from the ones that close it reactively.”

This was an excellent point. The gap is real, and I agree: I don’t think enough orgs have a solid grasp on this.

great read!