The First Test in Carnegie Mellon's Data & AI Executive Program

...And Why 73% of AI Initiatives Fail Before Models Ever Run

TJ: If you are confronting epistemic governance problems in your organization where different people trust different versions of truth based on whose political interests the data supports, I would be genuinely interested in hearing what patterns you are seeing.

I am six weeks into Carnegie Mellon’s Chief Data & AI Officer executive program, and my team just received our first deliverable assignment—a fifteen-page project plan documenting company research, industry analysis, stakeholder mapping, and our approach to developing an enterprise data and AI strategy roadmap for the organization we selected.

The program is explicitly demanding rigor, which is appropriate given what these foundational assessments are meant to support. What I have learned across twenty years as a security leader, though, is that rigor requires more than thorough research execution—it requires constitutional governance over how truth gets established, how that truth gets tested adversarially, and how uncertainty gets preserved through synthesis instead of being systematically laundered into false confidence.

I have watched the same pattern destroy value mechanically in every organization I have worked with over the years. Let’s consider a few instances:

Your analysts discover through careful financial mapping that the largest customer segment generates genuinely negative unit economics. The CFO explains it fluently as strategic investment in market share that will deliver profitability at scale, and your analysis documents this as growth opportunity with margin expansion potential.

The same team identifies that sixty percent of revenue sits behind customer agreements containing change-of-control provisions. Business development assures everyone these clauses rarely trigger in practice, and your assessment codes this as customer concentration risk, manageable.

Technical diligence reveals the core platform requires fifty million dollars and eighteen months to migrate off the current hyperscaler. The CTO positions this as cloud-native architectural sophistication, and your deliverable describes it as strong technical foundation.

Structural fragility somehow became strategic positioning. Nobody fabricated data, but the analytical framework did exactly what it was designed to do: systematically remove uncertainty until observable constraints transformed into narrative elements supporting whatever direction leadership was already inclined to pursue.

This is the ever-present pattern: findings get shaped to support whatever narrative leadership has already committed to, instead of the evidence determining which strategic options actually survive contact with reality.

This happens mechanically rather than through individual analytical failure. The problem is architectural.

When Feedback Loops Are Brutal and Compressed

My background and career track have forced me to develop different instincts because cybersecurity creates feedback loops that are both brutal and compressed. (TJ: My cardiologist and wife can both attest to this.) When your threat model fundamentally mischaracterizes risk or your controls prove ineffective, breaches happen on timelines you do not control, production stops, and regulators arrive with enforcement authority that does not care about your explanations. You develop discipline around uncomfortable truths because the alternative is professional extinction.

I am expanding breadth and influence now through data and AI strategy frameworks where feedback loops stretch across quarters or years instead of weeks. Bad security architecture surfaces in breach reports that create immediate forcing functions for accountability. Bad epistemic governance in foundational strategic assessments hides inside multi-year transformation programs where it compounds through every subsequent decision until the accumulated analytical errors become visible as destroyed enterprise value that nobody can trace back to the initial assessment work that set everything on the wrong trajectory.

Think about what happens when you build data strategy recommendations on stakeholder interviews where different executives trust different data sources selectively based on which reports support their existing positions, and your framework provides no mechanism for resolving those conflicts beyond documenting that perspectives vary. The technical work proceeds exactly as planned, but your entire strategic foundation rests on politically contested epistemological ground that will collapse the moment implementation requires someone to definitively lose an argument about whose version of reality governs investment decisions.

Six Controls That Make the Work Harder On Purpose

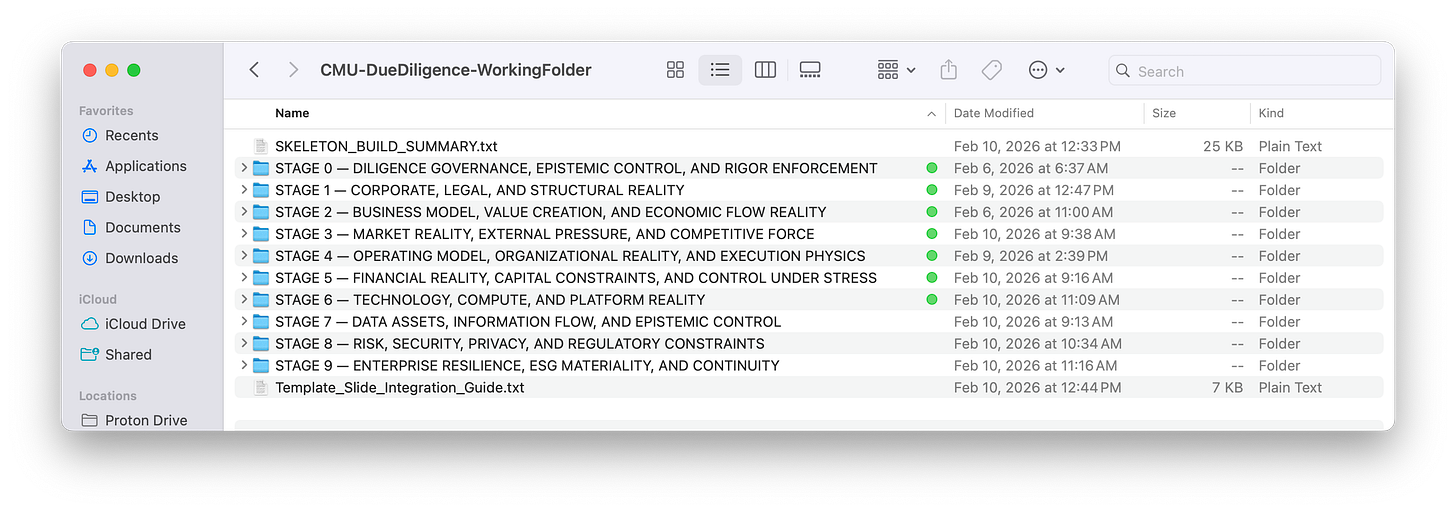

Before we touched company research or began competitive analysis, I proposed building a Stage 0, a constitutional layer that locks in before our substantive work begins. It consists of six controls that make our work measurably harder, because difficulty is how you know constitutional governance is actually functioning. The six controls are laid out directly below:

0.1 Strategic intent lock declares what our analysis is permitted to conclude and what it is forbidden to do. Company assessment only—no strategy recommendations embedded in findings, no transformation roadmaps implied through selective emphasis. If readers could interpret our findings as containing implicit recommendations, the lock failed.

ROLE:

You are acting as the Diligence Director (authoritative).

MISSION (NON-NEGOTIABLE):

Define what this diligence is allowed to decide — and what it is explicitly forbidden to do.

This exists to prevent strategy creep, solution bias, and retrofitted conclusions.

ANALYTICAL BURDEN:

You MUST:

• Declare the exact decision classes this diligence supports

• Explicitly prohibit strategy design, roadmaps, and recommendations

• Lock the lens to discovery, not prescription

• Define the consumer(s) of truth (e.g., IC, board, CDAIO, acquirer)

MANDATORY ADVERSARIAL TESTS:

You MUST explicitly answer:

• What would constitute misuse of these outputs?

• What decisions should NOT be made from this work?

• What incentives exist to overstep these boundaries?

• Who benefits if these boundaries blur?

0.2 Evidence hierarchy defines what constitutes legitimate evidence and how we resolve conflicts. SEC filings outrank earnings calls because filings carry legal liability. Audited financials outrank investor presentations because one has independent verification. Every material assertion traces to source documents through transparent lineage.
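One way to make this ordering mechanical is sketched below in Python. It is only an illustration: the tier names come from the trust hierarchy in the prompt for this control, while the `Evidence` record, the `resolve` helper, the choice to treat an earnings call as ISSUER-tier, and the example claims are all hypothetical assumptions rather than part of our actual tooling.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Tier(IntEnum):
    """Trust tiers from the hierarchy; a lower number means more trusted."""
    REGULATORY = 1
    AUDITED = 2
    CONTRACTUAL = 3
    ISSUER = 4
    THIRD_PARTY = 5
    MEDIA = 6


@dataclass(frozen=True)
class Evidence:
    claim: str    # what the source asserts
    source: str   # document the assertion traces back to
    tier: Tier


def resolve(items: list[Evidence]) -> tuple[Optional[Evidence], list[Evidence]]:
    """Pick the most trusted item; return None if the top tier is tied.

    The second element carries every item that did not prevail, so
    overruled or unresolved evidence stays on the record.
    """
    ranked = sorted(items, key=lambda e: e.tier)
    top = [e for e in ranked if e.tier == ranked[0].tier]
    if len(top) > 1:
        return None, ranked  # same-tier conflict: escalate, do not guess
    return ranked[0], ranked[1:]


# Hypothetical example data, not drawn from any real company.
filing = Evidence("Segment unit economics are negative", "10-K segment note", Tier.REGULATORY)
call = Evidence("Segment is a strategic growth investment", "earnings call", Tier.ISSUER)

winner, overruled = resolve([call, filing])
print(winner.source, "overrules", [e.source for e in overruled])
```

The design choice worth noting is that the losing evidence is returned rather than discarded, and a tie inside one tier is surfaced as unresolved instead of being settled silently.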

ROLE:

You are acting as the Evidence & Methodology Authority.

MISSION:

Define what counts as evidence, how it is weighted, and how conflicts are resolved.

This is the line between diligence and opinion.

ANALYTICAL BURDEN:

You MUST:

• Define primary vs secondary vs inferential evidence

• Establish a trust hierarchy:

REGULATORY > AUDITED > CONTRACTUAL > ISSUER > THIRD-PARTY > MEDIA

• Define how stale, contradictory, or partial evidence is handled

• Define minimum citation requirements per claim type

MANDATORY ADVERSARIAL TESTS:

You MUST explicitly answer:

• What evidence would NOT be sufficient?

• When is inference allowed — and when is it forbidden?

• How are analyst echo chambers avoided?

• How is availability bias prevented?

0.3 Mandatory falsification requires documented searches for disconfirming evidence before we claim any hypothesis has support. The most valuable finding is often "we expected X, but the evidence indicates not-X." Institutional patterns reward confidence, however, so contradictory evidence gets buried as "areas requiring additional investigation."
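A minimal Python sketch of how this rule could be tracked follows. The `Hypothesis` class, its status labels, and the example statement are hypothetical, and the rule that any logged contradiction collapses the hypothesis is a deliberate simplification of the judgment a real red team would apply.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Hypothesis:
    statement: str
    searches: list[str] = field(default_factory=list)        # disconfirming searches run
    contradictions: list[str] = field(default_factory=list)  # what those searches found

    def log_search(self, description: str, found: Optional[str] = None) -> None:
        """Record a search for disconfirming evidence and anything it turned up."""
        self.searches.append(description)
        if found is not None:
            self.contradictions.append(found)

    def status(self) -> str:
        if not self.searches:
            return "UNTESTED"   # no claim of support is allowed yet
        if self.contradictions:
            return "COLLAPSED"  # reported as a finding, not buried
        return "SURVIVED"


# Hypothetical hypothesis and searches, for illustration only.
h = Hypothesis("Change-of-control clauses rarely trigger in practice")
before = h.status()
h.log_search("Reviewed customer agreements for exercised provisions")
after_clean_search = h.status()
h.log_search("Checked prior acquisitions in the sector", found="Two customers exited after a sale")
after_contradiction = h.status()
print(before, after_clean_search, after_contradiction)
```

Keeping the list of searches alongside the result also answers the question "what evidence was sought but not found?" without extra bookkeeping.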

ROLE:

You are acting as the Red Team Lead.

MISSION:

Ensure every major line of inquiry attempts to prove itself wrong.

No hypothesis survives unchallenged.

ANALYTICAL BURDEN:

You MUST:

• Require explicit hypotheses for each stage

• Mandate disconfirming evidence searches

• Track hypothesis survival or collapse

• Prevent hypothesis drift into assumption

MANDATORY ADVERSARIAL TESTS:

You MUST explicitly answer:

• What would disprove each hypothesis?

• What evidence was sought but not found?

• Where did confidence decrease?

• Which hypotheses collapsed, and why?

0.4 Uncertainty preservation distinguishes between things not yet verified and things that cannot be verified with available information. We attach confidence levels to every significant claim. When stakeholder interviews contradict financial filings, we document that contradiction rather than averaging it into comfortable middle positions that obscure the fact we cannot determine ground truth.
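The difference between documenting a contradiction and averaging it away can be shown in a few lines of Python. This is a sketch under stated assumptions: the `Reading` and `Finding` records, the `reconcile` function, the tolerance threshold, and the churn figures are all hypothetical and chosen only to illustrate the idea.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Reading:
    source: str
    value: float
    confidence: float  # analyst-assigned, 0.0 to 1.0


@dataclass(frozen=True)
class Finding:
    metric: str
    status: str             # "CONSISTENT" or "CONTRADICTED"
    value: Optional[float]  # None when ground truth cannot be determined
    readings: tuple[Reading, ...]


def reconcile(metric: str, readings: list[Reading], tolerance: float) -> Finding:
    """Report one value only when sources agree; otherwise keep the conflict."""
    values = [r.value for r in readings]
    if max(values) - min(values) > tolerance:
        # Sources disagree: publish no single number, preserve every reading.
        return Finding(metric, "CONTRADICTED", None, tuple(readings))
    best = max(readings, key=lambda r: r.confidence)
    return Finding(metric, "CONSISTENT", best.value, tuple(readings))


# Hypothetical figures for illustration only.
interview = Reading("stakeholder interview", 0.05, 0.4)
filing = Reading("financial filing", 0.12, 0.9)
finding = reconcile("annual churn", [interview, filing], tolerance=0.02)
print(finding.status, finding.value)
```

An averaging approach would have reported roughly 0.085 here, a number that neither source supports.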

ROLE:

You are acting as the Epistemic Risk Analyst.

MISSION:

Prevent uncertainty from being smoothed, hidden, or laundered.

Uncertainty must be classified, bounded, and preserved.

ANALYTICAL BURDEN:

You MUST:

• Distinguish UNKNOWN from UNKNOWABLE

• Require confidence levels on all claims

• Prevent aggregation from erasing variance

• Force explicit statements of confidence decay

MANDATORY ADVERSARIAL TESTS:

You MUST explicitly answer:

• What do we not know?

• What cannot be known from public sources?

• Where does uncertainty materially affect decisions?

• Where would false certainty be dangerous?

0.5 Independent enforcement establishes red-team review where at least one team member challenges every major finding with authority to force complete rework regardless of schedule pressure. Without this, all other controls become performance theater.

ROLE:

You are acting as the Independent QA & Red Team Authority.

MISSION:

Ensure no stage self-certifies rigor.

All stages must be challengeable, reversible, and auditable.

ANALYTICAL BURDEN:

You MUST:

• Define cross-stage QA checks

• Require red-team review for synthesis stages

• Establish fail / redo authority

• Prevent schedule or convenience pressure from overriding rigor

MANDATORY ADVERSARIAL TESTS:

You MUST explicitly answer:

• Who can force a redo?

• What triggers escalation?

• How are weak stages detected?

• How is dissent preserved?

0.6 Output integrity structures our deliverable so uncertainty bounds cannot be stripped out by readers who only engage with executive summaries. Confidence qualifiers embed directly with claims rather than living in footnotes that disappear during excerpting.
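As a rough illustration of qualifiers that travel with the claim, the Python sketch below renders every claim together with its confidence level and sources, and builds the summary only from rendered claims. The `Claim` class, the bracketed format, and the example text are hypothetical assumptions, not the format our deliverable actually uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    text: str
    confidence: str           # e.g. "high", "medium", "low"
    sources: tuple[str, ...]  # lineage back to source documents

    def __post_init__(self) -> None:
        if not self.sources:
            raise ValueError("a claim without traceable evidence cannot be published")

    def render(self) -> str:
        """Return the claim with its qualifier attached in the same unit of text."""
        return f"{self.text} [confidence: {self.confidence}; sources: {', '.join(self.sources)}]"


def executive_summary(claims: list[Claim]) -> str:
    # The summary is built only from rendered claims, never from bare text.
    return "\n".join(c.render() for c in claims)


# Hypothetical claim for illustration only.
claim = Claim(
    "Platform migration would take roughly eighteen months",
    "low",
    ("technical diligence notes",),
)
summary = executive_summary([claim])
print(summary)
```

Because the qualifier sits inside the rendered string, anyone excerpting a line from the summary copies the confidence level and sources along with the claim.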

ROLE:

You are acting as the Output Integrity & Risk Analyst.

MISSION:

Ensure outputs cannot be stripped of context, caveats, or uncertainty.

This exists to prevent downstream misuse.

ANALYTICAL BURDEN:

You MUST:

• Require claim → evidence traceability

• Embed caveats and confidence with outputs

• Prevent selective excerpting

• Define how summaries must reference full analysis

MANDATORY ADVERSARIAL TESTS:

You MUST explicitly answer:

• How could these outputs be misused?

• How is overconfidence prevented?

• What context must never be removed?

• What warnings must travel with outputs?

These controls make our deliverable harder to produce. That difficulty is the entire point of constitutional governance. I provide the prompt in its entirety at the end of the article.

Why 73% of AI Initiatives Collapse Before Models Run

The conventional wisdom about AI failure is well-documented and widely accepted across industry research.

MIT’s 2025 study shows ninety-five percent of enterprise AI pilots deliver zero measurable return, S&P Global Market Intelligence reports forty-two percent of companies abandoned most AI initiatives in 2025 up from seventeen percent the year before, and Informatica’s CDO Insights survey identifies the primary culprits as data quality and readiness at forty-three percent, lack of technical maturity at forty-three percent, and shortage of skills at thirty-five percent.

The diagnosis has become remarkably consistent: fix data quality through governance, align stakeholders through communication, clarify use cases through business discipline, and invest in organizational capabilities through training. To be clear, I completely agree that data quality must be paramount.

I have watched organizations follow that exact playbook, spending millions on data quality initiatives and governance frameworks, while the failure rate stayed constant. Is that because the conventional diagnosis treats symptoms as root causes? Let’s see.

Here is what the industry analysis consistently misses: data quality problems are real, but organizations discover them only after committing strategic direction based on foundational assessments that mistook management narrative for enterprise reality. Read that again. They then launch expensive governance initiatives to fix quality issues in systems they should never have selected, for use cases they never properly validated, supporting business models they never stress-tested against contradictory evidence that was available during assessment.

I use CISO disciplines to protect the enterprise, and I am building CDAIO capabilities to drive enterprise growth. That combination is unusual enough that it changes what you can see when you look at organizational failures and where you have leverage to force change before patterns become irreversible. Both roles operate in domains where failure stays completely invisible until it becomes catastrophic enough to force a response, where truth is frequently politically inconvenient in ways that create real career risk for people who insist on it, and where institutional incentives systematically reward narrative comfort over analytical accuracy because comfortable narratives enable action while uncomfortable truths force confrontation with constraints leadership would prefer to ignore.

The difference between these domains is timing rather than fundamental challenge. Security failures surface on attacker timelines you cannot control or negotiate—hours or days or weeks that force immediate accountability because external threat actors do not wait for your strategic planning cycle to complete. Bad epistemic governance in foundational strategic assessments hides inside multi-year transformation programs where compounding analytical errors take quarters or years to become visible as destroyed enterprise value that nobody can trace definitively back to the initial assessment work that set everything on the wrong trajectory at the very beginning when course correction would have been relatively inexpensive.

Organizations do not fail at AI implementation because they chose the wrong technology platforms or because their data quality turned out to be insufficient for the models they wanted to deploy. They fail because their foundational assessments—the exact work this first deliverable requires us to produce—systematically mistake management narrative for enterprise reality, and every subsequent recommendation inherits that distortion, compounds it through additional layers of analysis that treat the initial assessment as settled truth, and amplifies it into strategic direction that destroys value at scale. The data quality issues surface later as symptoms because the foundational assessment never properly tested whether proposed use cases could actually be supported by available data, whether the business model is economically viable when you account for actual unit economics, or whether stakeholders claiming alignment actually agree on what constitutes authoritative information when positions conflict during implementation.

My belief is that you cannot fix this pattern by executing harder or by investing more capital in data quality remediation after flawed strategic direction has already been set. The failure is architectural and requires constitutional governance operating at the assessment design level, before anyone commits resources to directions that were never properly validated against evidence.

Nine Stages of Constraint Discovery

This starts a series walking through nine stages of progressive constraint discovery, each designed to surface truths that standard assessments routinely smooth away. Corporate structure and legal constraints. Business model mechanics and value flows. Market forces and competitive dynamics. Organizational execution reality. Financial behavior under stress. Technology constraints and platform dependencies. Information flows and epistemic authority. Risk boundaries and regulatory constraints. Resilience under shock.

The goal is never comprehensive analysis—that is a comfortable fiction. The goal is accurate discovery of what actually constrains outcomes, preserved with uncertainty bounds intact, structured to resist reinterpretation by people who never read the underlying evidence.

The next piece covers Stage 1: why standard assessments systematically confuse organizational charts with actual power structures, why legal entities map poorly to operational reality, and why formal corporate structure tells you almost nothing about where authority actually lives in practice. Cheers!